How to use Azure ML registries to share models, components, and environments

Topic: Azure ML: from beginner to pro

Introduction

We all want to be more productive in our jobs. And it’s no secret that one of the most effective ways for ML engineers and data scientists to be productive is by reusing and sharing our code. With the recent announcement of Azure ML registries, reusing code has become so much easier! Registries allow us to share Azure ML assets (models, components, and environments) across all users in an organization, opening up many scenarios that will help us be more efficient. Here are some examples:

- We can create workspaces for development, testing, and production and have our assets flow seamlessly from one to the next. For instance, we can train our model with anonymized data in our development workspace, and then adjust it to incorporate the full dataset in our production workspace. Registries enable us to easily use the same model in both workspaces, which simplifies the before and after comparison, and helps us to quickly detect any issues.

- We can more easily reuse code written by our coworkers, and they can more easily reuse code written by us. For example, suppose that I refactor code that I think may be useful in future projects, and register it as a component in an organization-wide registry. A coworker on another team is now able to easily use my code, instead of writing it from scratch.

- Everyone using Azure ML now has access to certain models, components, and environments that Microsoft ships in the “azureml” public registry. For example, we can now use Responsible AI components directly from that registry, instead of having to use a script to install those as we did before.

Registries have many more advantages than these three, and I can’t wait to show you how easy it is to use this feature.

The project associated with this post can be found on GitHub, so make sure you open it and follow along!

Training and inference on your development machine

As always, we’ll start by training and testing our model on our development machine (which could be your actual physical machine or a remote machine you use for development). I’ll keep including this section in all of my blog posts, because I can’t stress enough how much more productive you’ll be if you polish your model before moving it to the cloud! You can train the PyTorch model in this project by selecting the “Train locally” run configuration in VS Code, and pressing F5. Training should take only a few minutes for our simple Fashion MNIST model. Our code uses MLflow for logging, so when training is done we can execute the following command to look at the logs:

mlflow ui

Clicking on the link provided opens a new page with all the logs. If you look at the metrics section, you should see that training and validation accuracy are around 85-86%. You can then test your model by running the “Test locally” run configuration, which should print a similar test accuracy. In this scenario, because we’re using a small dataset (60,000 training images and 10,000 test images), we’re able to use the full dataset when running locally. If you have a much larger dataset, you may have to use a subset.

You can see all the PyTorch code used to train and test the model in the src directory of our project.

Creating a registry

Once we’re confident about our trained model, we’re ready to train and deploy it in the cloud. I’ll be using registries to share my work as I move it to the cloud, so my first step is to create the registry itself.

But what exactly is a registry? It’s an organization-wide repository of Azure ML assets. Let’s look at this definition in more detail:

- By “organization-wide,” we mean that you can control the permissions to your registry resource the same way that you can control permissions to any other Azure resource. Anyone with access to the registry has access to all the Azure ML assets you add to it.

- When we refer to “Azure ML assets,” we mean models, environments, components, and data. Currently, Azure ML only supports adding models, environments, and components, though the team will consider adding support for data assets in the future.

Registries can be created like any other Azure ML resource: by using the UI in the Azure ML studio, by writing code using the Python SDK, or by using YAML files and commands in the CLI. We’ll use the third method in this post. Let’s start by looking at registry.yml, the YAML configuration file we’ll be using to create the registry:

name: registry-demo
tags:
  description: A demo registry to show how to use the Azure ML registry.
location: westus2
replication_locations:
  - location: westus2
    storage_config:
      storage_account_hns: False
      storage_account_type: Standard_LRS
  - location: eastus2
    storage_config:
      storage_account_hns: False
      storage_account_type: Standard_LRS

We named our registry “registry-demo” and added a description, which will show up in the portal once the registry is created. We also specified a primary region (under “location”), and a set of optional secondary regions (under “replication_locations”) with extra configuration. Notice that the primary location is repeated in the replication section. Only workspaces in these regions will be able to share assets with the registry — the primary region can’t be changed after the registry is created, but the secondary regions can be updated later.

The optional “storage_config” section lets us configure the type of storage account we want in each region. You can decide what type of storage is right for you by reading this documentation page, and based on that decision, you can figure out what to set “storage_account_type” to on this page. In my scenario, I want locally redundant blob storage, so I chose the “Standard_LRS” storage type. I also set “storage_account_hns” to False because I specifically want “Azure Blob Storage.” If I wanted “Azure Data Lake Storage Gen2,” I would have set it to True. You can read more details about the settings in this YAML file in the documentation.
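To make the note about the primary region concrete, here is a minimal Python sketch that mirrors registry.yml as a plain dictionary and checks that the primary region also appears under “replication_locations.” The function name check_registry_config is hypothetical, and the check only encodes what we can observe in the YAML above. It is an assumption for illustration, not an official Azure ML validation rule.

```python
# The same settings as registry.yml, written as a plain Python dictionary.
registry_config = {
    "name": "registry-demo",
    "tags": {
        "description": "A demo registry to show how to use the Azure ML registry.",
    },
    "location": "westus2",
    "replication_locations": [
        {
            "location": "westus2",
            "storage_config": {
                "storage_account_hns": False,
                "storage_account_type": "Standard_LRS",
            },
        },
        {
            "location": "eastus2",
            "storage_config": {
                "storage_account_hns": False,
                "storage_account_type": "Standard_LRS",
            },
        },
    ],
}


def check_registry_config(config):
    """Return a list of problems found (hypothetical helper, illustration only)."""
    problems = []
    primary = config.get("location")
    regions = [entry.get("location") for entry in config.get("replication_locations", [])]
    if not primary:
        problems.append("missing primary 'location'")
    elif regions and primary not in regions:
        # Assumption: the primary region should be repeated in the replication list.
        problems.append(
            f"primary region '{primary}' is not listed under replication_locations"
        )
    return problems


print(check_registry_config(registry_config))  # prints []
```

Running a quick check like this before calling the CLI is optional, but it can catch a mismatched region name early.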

We can now create the registry in the cloud with the following command:

az ml registry create --file registry.yml

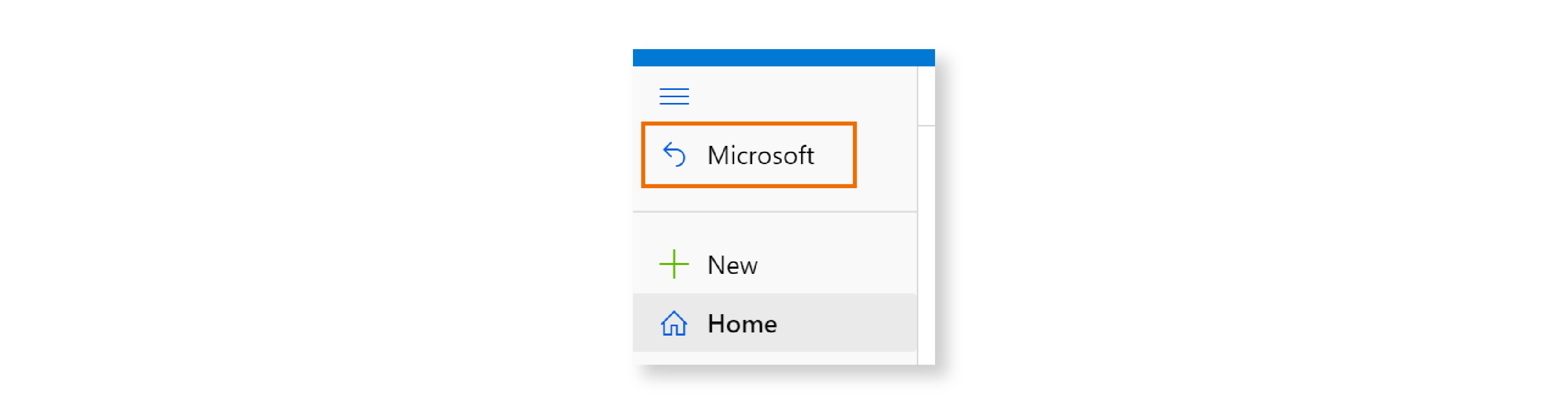

Let’s verify that the registry was created. Go to the Azure ML Studio, and click on “Microsoft” at the top of the left navigation.

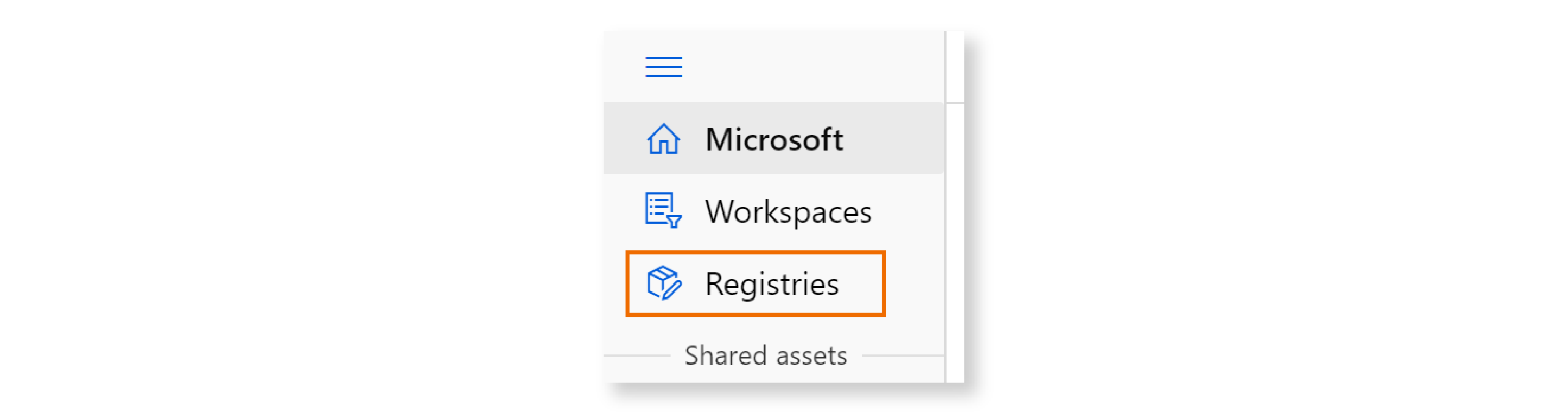

Then click on “Registries”:

You should see the registry you created listed on this page:

Great! We now have a registry, and can add assets to it. But before we do that, let’s think about who should have access to the registry. In this scenario, my goal is to share a few assets I’m creating with my colleague Seth Juarez, so I need to give him read access. In order to do that, I can go to the Azure portal, search for “registry-demo” (the name of our registry resource), and click on it. Then on the left navigation, I can click on “Access control (IAM),” then on “Add” in the top menu, and then on “Add role assignment.” I can then select the “Reader” role, click “Next,” click on “Select members,” enter Seth’s email, and then click on “Review + assign.” I can verify that Seth does indeed have read access to this registry under the “Role assignments” tab.

I now have a registry created on Azure ML, and Seth has reader access to it. If I want to share any assets with him, I can just add them to the registry.

Registering an environment and components in the registry

Training and deploying a model in the cloud requires the creation of several Azure ML entities. Here’s the complete list of entities we need for this project:

- an environment — the software we want installed on the VM

- two components — components are reusable pieces of code, and we need one for our training code and another for our test code

- a compute cluster — the hardware specification of the VM we want to use

- a dataset — a cloud copy of our data

- a pipeline — which connects the components and runs them in the cloud

- a managed online endpoint — which will allow us to query the trained model in a scalable way

- a deployment — which contains the configuration for the endpoint

There are two types of entities in Azure ML:

- Assets - These are workspace agnostic, and include models, components, environments, and data. Azure ML currently supports sharing models, components, and environments across workspaces, through the use of registries.

- Resources - These are specific to a particular workspace, and include endpoints, deployments, jobs, and compute. For example, the scoring URI of an endpoint is tied to its workspace, and a job/pipeline runs for some amount of time in its workspace. These entities can’t be shared across workspaces.

In this section, we’ll create the environment and components for our project. My coworker Seth would like to reuse those assets in his project, so I will share them with Seth by registering them in our common registry. I already have YAML configuration files for them in the GitHub project, so I can just execute the following simple CLI commands:

az ml environment create --file environment-train.yml --registry-name registry-demo

az ml component create --file train.yml --registry-name registry-demo

az ml component create --file test.yml --registry-name registry-demo

Notice that in order to register these assets in my registry, I just need to add --registry-name registry-demo to the command that creates them. If I had omitted that portion of the command, the assets would have been registered in my workspace. Pretty easy.

I won’t go over the contents of these YAML files here because I’ve already covered them in detail in previous posts. If you want to delve deeper, I recommend that you read my blog post on environments and my blog post on components. However, I will point out that the YAML for my components refers to the environment in the registry using the syntax below, as you can see in train.yml:

$schema: https://azuremlschemas.azureedge.net/latest/commandComponent.schema.json

name: component_registry_train

version: 1

type: command

...

environment: azureml://registries/registry-demo/environments/environment-registry/versions/1

...
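The reference on the environment line follows a regular pattern: the registry name, the asset type, the asset name, and the version. Here is a small Python sketch that builds such a reference. The helper registry_asset_uri and its name check are my own illustration (assumptions, not part of the Azure ML SDK or CLI).

```python
import re

# Asset types that this post says a registry can hold.
REGISTRY_ASSET_KINDS = ("models", "components", "environments")

# Conservative name check used only by this sketch (an assumption,
# not Azure ML's official naming rule).
_NAME_PATTERN = re.compile(r"^[A-Za-z0-9][A-Za-z0-9_.-]*$")


def registry_asset_uri(registry, kind, name, version):
    """Build an azureml://registries/... reference (hypothetical helper)."""
    if kind not in REGISTRY_ASSET_KINDS:
        raise ValueError(f"unsupported asset type: {kind!r}")
    for label, value in (("registry", registry), ("name", name)):
        if not _NAME_PATTERN.match(value):
            raise ValueError(f"invalid {label}: {value!r}")
    return f"azureml://registries/{registry}/{kind}/{name}/versions/{version}"


print(registry_asset_uri("registry-demo", "environments", "environment-registry", 1))
# prints azureml://registries/registry-demo/environments/environment-registry/versions/1
```

Generating the string in one place is handy if you refer to the same registry from several YAML files or scripts.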

I can verify that these assets are registered in my registry by accessing the registry in the studio as I explained earlier, and then clicking on “Components” or “Environments” to see the list of registered assets.

For example, this is what I see when I click on “Components”:

Training and deploying in the current workspace

Next I want to train a model and deploy it in my workspace, using the environment and components I just added to the registry. I don’t need to create them again in my workspace because I have access to the registry — I can just reuse them! That’s really the point of using registries.

Let’s start by creating the compute cluster, copying the data to the cloud, and kicking off a pipeline job:

az ml compute create -f cluster-cpu.yml

az ml data create -f data.yml

run_id=$(az ml job create --file pipeline-job.yml --query name -o tsv)

My pipeline uses the two components in the registry, by referring to them with the following syntax:

$schema: https://azuremlschemas.azureedge.net/latest/pipelineJob.schema.json
type: pipeline
experiment_name: aml_registry
...
jobs:
  train:
    type: command
    component: azureml://registries/registry-demo/components/component_registry_train/versions/1
    ...
  test:
    type: command
    component: azureml://registries/registry-demo/components/component_registry_test/versions/1
    ...

This syntax should look familiar — it’s pretty similar to the syntax you saw earlier to refer to the environment in the registry. I can now go back to the studio, click on “Workspaces,” click on my workspace name, and then check that my job is running under the “Jobs” section. Once the job has completed, I can register the trained model in my workspace with the following command:

az ml model create --name model-registry --path "azureml://jobs/$run_id/outputs/model_dir" --type mlflow_model

I can then deploy the model in the current workspace with the following commands:

az ml online-endpoint create -f endpoint-workspace.yml

az ml online-deployment create -f deployment-workspace.yml --all-traffic

I won’t go into detail about these YAML configuration files because I’ve covered them in depth in my post on managed endpoints and deployments. Once these resources are created, I can invoke the endpoint with this command:

az ml online-endpoint invoke --name endpoint-registry-workspace --request-file ../test_data/images_azureml.json

Here’s the result I get from the invocation:

"[{\"0\": -4.727311611175537, \"1\": -4.995536804199219, \"2\": -2.837876558303833, \"3\": -4.04545783996582, \"4\": -2.488375186920166, \"5\": 4.3667402267456055, \"6\": -1.129504680633545, \"7\": 5.397668361663818, \"8\": 1.4192906618118286, \"9\": 7.125359535217285}, {\"0\": 1.0292633771896362, \"1\": -2.8376171588897705, \"2\": 10.29583740234375, \"3\": -0.8355491161346436, \"4\": 6.236623764038086, \"5\": -6.159229278564453, \"6\": 8.195236206054688, \"7\": -7.478643417358398, \"8\": 1.1837413311004639, \"9\": -7.222011089324951}]"

If you’d like to understand what this result means in the context of Fashion MNIST, you can read my blog post on training a model using the Fashion MNIST dataset and PyTorch.
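For a quick read of the response above, the Python sketch below decodes it. It assumes that each key is a class index, that each value is the model's raw score for that class, and that the highest score is the prediction. The label names are the standard Fashion MNIST classes. The function decode_predictions is a hypothetical helper, not something from the project.

```python
import json

# Standard Fashion MNIST class names, indexed by label.
FASHION_MNIST_LABELS = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]

# The response printed by the CLI, copied from above.
raw_response = (
    r'"[{\"0\": -4.727311611175537, \"1\": -4.995536804199219, '
    r'\"2\": -2.837876558303833, \"3\": -4.04545783996582, '
    r'\"4\": -2.488375186920166, \"5\": 4.3667402267456055, '
    r'\"6\": -1.129504680633545, \"7\": 5.397668361663818, '
    r'\"8\": 1.4192906618118286, \"9\": 7.125359535217285}, '
    r'{\"0\": 1.0292633771896362, \"1\": -2.8376171588897705, '
    r'\"2\": 10.29583740234375, \"3\": -0.8355491161346436, '
    r'\"4\": 6.236623764038086, \"5\": -6.159229278564453, '
    r'\"6\": 8.195236206054688, \"7\": -7.478643417358398, '
    r'\"8\": 1.1837413311004639, \"9\": -7.222011089324951}]"'
)


def decode_predictions(response_text):
    """Map each set of scores to the label with the highest score."""
    decoded = json.loads(response_text)
    # The CLI output is a JSON string that itself contains JSON, so decode again.
    if isinstance(decoded, str):
        decoded = json.loads(decoded)
    labels = []
    for scores in decoded:
        best_index = max(scores, key=scores.get)  # key with the highest score
        labels.append(FASHION_MNIST_LABELS[int(best_index)])
    return labels


print(decode_predictions(raw_response))  # prints ['Ankle boot', 'Pullover']
```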

Great! I now have an endpoint in my workspace, using the components and environment in my registry! Since Seth has read access to the registry, he can use those assets in the exact same way.

Copying the model to the registry and deploying it

I mentioned to Seth that I trained a model using the assets he wanted me to share with him, and he’s now also interested in using my model. Thankfully I can easily copy the model from my workspace to the registry, without even downloading it to my machine! Here’s the command I use:

az ml model create --registry-name registry-demo --path azureml://models/model-registry/versions/1 --type mlflow_model

This command specifies that I would like the model in path “azureml://models/model-registry/versions/1” (which refers to my workspace) to be copied to the registry named “registry-demo,” and that it should be registered as an MLflow model.

We can verify that it was created by seeing that it’s listed under the “Models” section of the registry’s page. Now both Seth and I can create an endpoint for the registry model with the following commands:

az ml online-endpoint create -f endpoint-registry.yml

az ml online-deployment create -f deployment-registry.yml --all-traffic

The only difference between deploying a model in the workspace and in the registry is really just the path to the model in the YAML configuration file. Here’s deployment-registry.yml, the YAML file for our registry’s deployment:

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineDeployment.schema.json

name: blue

endpoint_name: endpoint-registry

model: azureml://registries/registry-demo/models/model-registry/versions/1

...

And as a comparison, here’s deployment-workspace.yml, the equivalent file for a workspace deployment:

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineDeployment.schema.json

name: blue

endpoint_name: endpoint-registry-workspace

model: azureml:model-registry@latest

...
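The two model lines above use different reference forms: a full azureml://registries/... URI for the registry model, and the short azureml:name@label form for the workspace model. As a rough illustration, this Python sketch tells the two apart. The function describe_asset_reference is hypothetical and only handles the two forms shown in these deployment files.

```python
import re

# Registry form: azureml://registries/<registry>/<kind>/<name>/versions/<version>
_REGISTRY_REF = re.compile(
    r"^azureml://registries/(?P<registry>[^/]+)/(?P<kind>[^/]+)"
    r"/(?P<name>[^/]+)/versions/(?P<version>[^/]+)$"
)

# Workspace short form: azureml:<name>@<label> or azureml:<name>:<version>
_WORKSPACE_REF = re.compile(r"^azureml:(?P<name>[^:@/]+)(?:[:@](?P<version>.+))?$")


def describe_asset_reference(reference):
    """Say whether a reference points at a registry or at the workspace."""
    match = _REGISTRY_REF.match(reference)
    if match:
        return {"scope": "registry", **match.groupdict()}
    match = _WORKSPACE_REF.match(reference)
    if match:
        return {"scope": "workspace", **match.groupdict()}
    raise ValueError(f"unrecognized asset reference: {reference!r}")


print(describe_asset_reference("azureml:model-registry@latest"))
# prints {'scope': 'workspace', 'name': 'model-registry', 'version': 'latest'}
```

A check like this can be useful in a review script, for example to confirm that a deployment meant for sharing really points at the registry copy of the model.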

Once the deployment is finalized, you can invoke the endpoint as usual:

az ml online-endpoint invoke --name endpoint-registry --request-file ../test_data/images_azureml.json

This should give you a prediction similar to the one you got before.

Deleting the endpoints

Before you set this project aside, I recommend that you delete the endpoints you created, to avoid unnecessary charges:

az ml online-endpoint delete --name endpoint-registry-workspace -y

az ml online-endpoint delete --name endpoint-registry -y

The “azureml” public registry

You’ve seen how you can create a custom registry and add assets to it. It turns out that the Azure ML team has created a public registry named “azureml,” where you can find additional assets that are useful to all. For example, it includes components that make Responsible AI much easier to use.

Before registries, if you wanted to work with Responsible AI, you would need to install these components by running a separate script. Now with registries, we can skip that step! You can refer to these components from within your project by following the exact same techniques I showed in this post.

The team plans to keep adding new assets to this registry, so stay tuned!

Conclusion

In this post, you learned how to create a custom registry, how to add environments, components, and models to it, and how to use those assets in your project. Incorporating registries in your workflow will enable you to better share your work with your coworkers, and to reuse code that has been written by others. This is sure to increase your productivity! I hope that you’ll give this new Azure ML feature a try.

Thank you to Manoj Bableshwar from the Azure ML team at Microsoft for reviewing the content in this post.